Artificial intelligence

Amwelladmin (talk | contribs) No edit summary |

Amwelladmin (talk | contribs) No edit summary |

||

| Line 1: | Line 1: | ||

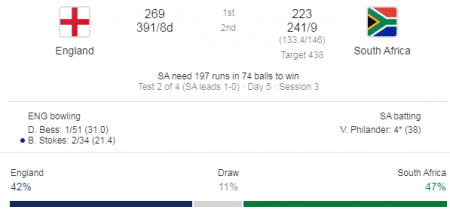

{{a|tech|}}That first great contradiction in terms. The [[algorithm]] which observes that you bought sneakers on Amazon last week, and concludes that carpet-bombing every unoccupied cranny in your cyber-landscape with advertisements for the exact trainers [[Q.E.D.]] you no longer need, is an effective form of advertising. | {{a|tech|[[File:Cricket prediction.png|450px|thumb|center|''Why'' your job is safe: machines might whup Alpha Go masters, but they don’t understand [[cricket]].]]}}That first great contradiction in terms. The [[algorithm]] which observes that you bought sneakers on Amazon last week, and concludes that carpet-bombing every unoccupied cranny in your cyber-landscape with advertisements for the exact trainers [[Q.E.D.]] you no longer need, is an effective form of advertising. | ||

Also, the technology employed by social media platforms like [[LinkedIn]] to save you the bother of composing your own unctuous endorsements of people you once met at a [[business day convention]] and who have just posted about the wild old time they've had at a [[panel discussion]] on the operational challenges of regulatory reporting under the [[securities financing transactions regulation]]. | Also, the technology employed by social media platforms like [[LinkedIn]] to save you the bother of composing your own unctuous endorsements of people you once met at a [[business day convention]] and who have just posted about the wild old time they've had at a [[panel discussion]] on the operational challenges of regulatory reporting under the [[securities financing transactions regulation]]. | ||

Revision as of 15:30, 7 January 2020

|

JC pontificates about technology

An occasional series.


That first great contradiction in terms. The algorithm which observes that you bought sneakers on Amazon last week, and concludes that carpet-bombing every unoccupied cranny in your cyber-landscape with advertisements for the exact trainers Q.E.D. you no longer need, is an effective form of advertising.

Also, the technology employed by social media platforms like LinkedIn to save you the bother of composing your own unctuous endorsements of people you once met at a business day convention and who have just posted about the wild old time they've had at a panel discussion on the operational challenges of regulatory reporting under the securities financing transactions regulation.

The practical reason your jobs are safe

Is that, for all the wishful thinking (blockchain! chatbots!), the “artificial intelligence” behind regtech at the moment just isn't very good. Oh, they'll talk a great game about “natural language parsing” and “tokenised distributed ledger technology” and so on, but bear in mind that what is going on under the hood is little more than a sophisticated Visual Basic macro. A lot of the magic of the world-wide web really isn’t, technologically, that sophisticated. Information retrieval is really no more than devising a basic metadata schema and hey, even muggins like the Jolly Contrarian can do that (how do you think this wiki works?). Actually parsing natural language, and doing that contextual, experiential thing of knowing that, yadayadayada, boilerplate, but whoa, hold on tiger, we’re not having that: that isn’t the kind of thing a startup with a PHP manual and a couple of web developers can develop on the fly. So expect proofs of concept that work OK on a pre-configured confidentiality agreement in the demo, but will be practically useless on the general warp and weft of the legal agreements you actually encounter in real life, as prolix, unnecessary and randomly drafted as they are.

The thing is, there is some genuinely staggering AI out there, but it ain’t in regtech: it is in the music industry. Take the AI drummer on Apple’s Logic Pro. That’s amazing, and that really is putting folks out of work. Likewise iZotope’s mastering plugins.

If only iZotope realised how much money there was in regtech, they wouldn't be faffing around with hobbyist home-recording types like yours truly.

The actual reason your jobs are safe

More particularly, here is why artificial intelligence won’t be sounding the death knell for the legal profession any time soon: computer language isn’t nearly as rich as human language. It doesn't have any tenses, for one thing. In this spurious fellow’s opinion, tenses, narratising as they do a spatio-temporal continuity of existence that, as we have known since the time of David Hume, cannot be deduced or otherwise justified on logical grounds, are the special sauce of consciousness, self-awareness and therefore intelligence. If you don’t have a conception of your self as a unitary, thinking thing through the past, the present and into the future, then you have no need to plan for the future or learn lessons from the past. You can’t narratise.

Machine language deals with past (and future) events without using tenses. All code is rendered in the present tense. Instead of saying:

- The computer’s configuration on May 1, 2012 was XYZ

Machine language will typically say:

- Where <DATEx> equals “May 1 2012”, let <CONFIGURATIONx> equal “XYZ”

This way a computer does not need to conceptualise itself yesterday as something different to itself today, which means it doesn’t need to conceptualise “itself” at all. Therefore, computers don’t need to be self-aware. Unless computer syntax undergoes some dramatic revolution (it could happen: we have to assume human language went through that revolution at some stage), computers will never be self-aware.
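The tenseless pattern above can be sketched in a few lines of Python. This is a minimal illustration under my own assumptions, not how any particular system actually stores its state: the names `configuration_history` and `configuration_on` are hypothetical, the rule that a configuration stays "in force" until the next dated entry is an assumption, and the 2013 entry is invented purely to show a change over time. Notice that nothing in the code says "was" or "is": the past is just another key to look up.

```python
from datetime import date
from typing import Dict, Optional

# Hypothetical history: each entry is a timeless fact of the form
# "where DATE equals d, let CONFIGURATION equal c". No tenses anywhere.
configuration_history: Dict[date, str] = {
    date(2012, 5, 1): "XYZ",
    date(2013, 6, 1): "ABC",  # invented later change, for illustration only
}


def configuration_on(when: date) -> Optional[str]:
    """Return the configuration in force on `when`.

    "In force" (an assumption of this sketch) just means the entry with the
    latest date that is not after `when`. The function never models an
    earlier or later "self"; it only compares dictionary keys.
    """
    candidates = [d for d in configuration_history if d <= when]
    if not candidates:
        return None  # nothing recorded on or before that date
    return configuration_history[max(candidates)]


# "What was the configuration on 1 May 2012?" becomes a present-tense lookup.
print(configuration_on(date(2012, 5, 1)))  # XYZ
```

The design choice is the point: by turning time into data, the program sidesteps any need to narrate its own history.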

It can’t handle ambiguity

Computer language is designed to allow machines to follow algorithms flawlessly. It needs to be deterministic — a given proposition must generate a unique binary operation with no ambiguity — and it can’t allow any variability in interpretation. This makes it different from a natural language, which is shot through with both ambiguity and variability. Synonyms. Metaphors. Figurative language. All of these are incompatible with code, but utterly fundamental to natural language.

- It is very hard for a machine language to handle things like “reasonably necessary” or “best endeavours”.

- Coding for the sort of redundancy which is rife in English (especially in legal English, which rejoices in triplets like “give, devise and bequeath”) dramatically increases the complexity of any algorithms.

- Aside from redundancy, there are many meanings that are almost, but not entirely, the same, and each must be coded for separately. This increases the load on the dictionary and the cost of maintenance.
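A toy Python sketch of the dictionary problem described in these bullets. The assumptions are all mine: the `CANONICAL_OPERATION` table, the `TRANSFER` label and the `interpret` / `interpret_phrase` helpers are hypothetical, and real legal-tech tools may work quite differently. It shows that a deterministic interpreter needs a separate entry for every synonym, so “give, devise and bequeath” costs three dictionary entries to say one thing, while an evaluative standard like “best endeavours” or “reasonably necessary” has no entry to look up at all.

```python
from typing import Set

# Hypothetical hand-maintained dictionary. Every surface form a drafter
# might use has to be mapped, one entry at a time, to one canonical operation.
CANONICAL_OPERATION = {
    "give": "TRANSFER",
    "devise": "TRANSFER",
    "bequeath": "TRANSFER",
}


def interpret(term: str) -> str:
    """Map a single drafting term to exactly one operation, or fail loudly."""
    key = term.strip().lower()
    if key not in CANONICAL_OPERATION:
        # Determinism means no guessing: an unmapped term simply has no meaning.
        raise KeyError(f"no deterministic meaning for {term!r}")
    return CANONICAL_OPERATION[key]


def interpret_phrase(phrase: str) -> Set[str]:
    """Interpret a legal triplet such as 'give, devise and bequeath'."""
    words = [w for w in phrase.replace(",", " ").split() if w.lower() != "and"]
    return {interpret(w) for w in words}


print(interpret_phrase("give, devise and bequeath"))  # {'TRANSFER'}

try:
    interpret("best endeavours")
except KeyError as err:
    print(err)  # the standard is not in the table, so there is nothing to apply
```

Extending the table to cover every near-synonym is exactly the dictionary load and maintenance cost the last bullet describes, and no amount of extension supplies a meaning for an evaluative standard.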

The ground rules cannot change

The logic and grammar of machine language, and the meanings assigned to its expressions, are profoundly static. The corollary of the narrow and technical purpose for which machine language is used is its inflexibility: machines fail to deal with unanticipated change.

Infinite fidelity is impossible

There is a popular “reductionist” movement at the moment which seeks to untangle bundled concepts by atomising them. The belief is that by separating bundles into their elemental parts you can ultimately dispel all ambiguity. A similar attitude influences contemporary markets regulation. This programme aspires to ultimate certainty: a single set of axioms from which all propositions can be derived. From this perspective, shortcomings in machine understanding of legal information are purely a function of insufficient detail, and supplying that detail is just a matter of time, given the collaborative power of the worldwide internet. The singularity is near: look at the incredible strides made in natural language processing (Google Translate), self-driving cars, and computers beating grand masters at chess and Go.

But you can split these into two categories: those that are the product of obvious (however impressive) computational feats, like chess, Go and self-driving cars, and those that are the product of statistical analysis and so are rendered as matters of probability, like Google Translate.

If our continued existence depended on a game of chess, we might commend our souls to the hands of a computer (well, I would). It won’t be long before we do a similar thing by getting into an AI-controlled self-driving car: we give ourselves absolutely over to the machine and let it make decisions which, if wrong, may kill us. But its range of actions is limited and the possible outcomes it must follow are obviously circumscribed: a single slim volume (the Highway Code) can comprehensively describe the rules with which it must comply. Outside machine failure, the main risk we run is presented by non-machines (folks like you and me) behaving outside the norms the machine has been programmed to expect. By contrast, I think we'd be less inclined to trust a translation.

- There is an inherent ambiguity in language, which legal drafting is designed to minimise but can’t eliminate.

See also

- The Singularity, which may or may not[1] be near

- Rumours of our demise are greatly exaggerated

- On machine code and natural language

References

- [1] Spoiler: Is not.